Detecting Obstacles with Xbox Kinect

Test Setup and Processing

The obstacle blocks were set up so that they faced the Kinect sensor and were always fully inside the sensor's field of view. They were also the nearest obstacles to the sensor. A depth map of the obstacles was acquired using the "kinect_test" MATLAB code listed below. The depth map is a 2D 640×480 array containing, for each pixel, the distance to the detected surface. The array is saved in the MATLAB workspace under the variable name "depthFrameData". This array can be plotted directly, but it contains data points other than the two block obstacles. To refine the data further, the following steps were implemented in the "depth_analysis.m" MATLAB file.

First, the array contained data points from the floor reflection, so these were filtered out using a height threshold that discards anything below a certain height. Second, only data points within a certain distance of the obstacles were kept, and the rest were zeroed. These steps isolated the blocks so that their distance and separation could be measured accurately. Once the blocks were isolated, the open spaces along the mid-height of the blocks were measured and the location of the largest open space was determined. This gives both the distance of the obstacles from the sensor and the location of the open space relative to the sensor. These values were stored in the variables "obstdepth" and "move", respectively, and were then passed to LabVIEW.
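The isolation and gap-search steps described above can be sketched in Python. This is not the code in "depth_analysis.m", which is not reproduced here. It is a minimal illustration that rests on several assumptions. Depths are in millimetres, and 0 means no reading. The height threshold is derived from a pinhole camera model with a level sensor. The constants SENSOR_HEIGHT_MM, FY, CY, MIN_HEIGHT_MM and DEPTH_WINDOW_MM are hypothetical values chosen for illustration. In this sketch, "move" is the pixel-column offset of the opening's centre from the image centre.

```python
from typing import List, Optional, Tuple

# Hypothetical values for illustration; none are taken from depth_analysis.m.
SENSOR_HEIGHT_MM = 400.0   # assumed height of the Kinect above the floor
FY = 580.0                 # assumed vertical focal length of the depth camera (pixels)
CY = 240.0                 # assumed principal-point row (centre of 480 rows)
MIN_HEIGHT_MM = 50.0       # points lower than this are treated as floor
DEPTH_WINDOW_MM = 300.0    # keep points within this range of the nearest obstacle

Frame = List[List[int]]    # depth in mm indexed [row][col]; 0 means no reading


def height_above_floor(row: int, depth_mm: float) -> float:
    # Pinhole model with a level sensor: rows below CY lie below the optical axis.
    drop_mm = (row - CY) * depth_mm / FY
    return SENSOR_HEIGHT_MM - drop_mm


def isolate_blocks(frame: Frame) -> Tuple[Frame, Optional[int]]:
    # Step 1: zero every pixel that lies below the height threshold (floor).
    filtered = [[d if d > 0 and height_above_floor(r, d) >= MIN_HEIGHT_MM else 0
                 for d in row] for r, row in enumerate(frame)]
    valid = [d for row in filtered for d in row if d > 0]
    if not valid:
        return filtered, None
    nearest = min(valid)  # relies on the blocks being the nearest obstacles
    # Step 2: zero every pixel more than DEPTH_WINDOW_MM behind the nearest one.
    isolated = [[d if d > 0 and d - nearest <= DEPTH_WINDOW_MM else 0 for d in row]
                for row in filtered]
    return isolated, nearest


def largest_opening(row: List[int]) -> Optional[Tuple[int, int]]:
    # Widest run of empty pixels with block pixels on both sides, returned as
    # (first empty column, first block column after it); None if no such run.
    best: Optional[Tuple[int, int]] = None
    start: Optional[int] = None
    seen_block = False
    for c, d in enumerate(row):
        if d > 0:
            if start is not None and (best is None or c - start > best[1] - best[0]):
                best = (start, c)
            seen_block, start = True, None
        elif seen_block and start is None:
            start = c
    return best


def analyze(frame: Frame) -> Tuple[Optional[int], Optional[float]]:
    isolated, obstdepth = isolate_blocks(frame)
    rows = [r for r, row in enumerate(isolated) if any(row)]
    if not rows:
        return obstdepth, None
    mid = (rows[0] + rows[-1]) // 2  # mid-height row of the remaining blocks
    gap = largest_opening(isolated[mid])
    if gap is None:
        return obstdepth, None
    gap_centre = (gap[0] + gap[1] - 1) / 2
    image_centre = (len(frame[0]) - 1) / 2
    move = gap_centre - image_centre  # negative: opening at lower column indices
    return obstdepth, move
```

Because the floor test uses a fixed sensor height and assumes a level sensor, any tilt or height change would shift which rows count as floor. A lateral distance in millimetres would also need the horizontal focal length, which this sketch does not model.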

The distance and separation values were read from the MATLAB workspace by the MATLAB code "LabVIEWMatlabComm.m", which passes them to the LabVIEW code "LabVIEWMatlabComm.vi". Once LabVIEW has acquired the values, they can be used to steer the robot using long-distance obstacle detection. All the code files used in this process are listed below.
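This section does not say how "LabVIEWMatlabComm.m" transfers the two values. The following is a hypothetical Python sketch of one simple wire format, not the original code. It packs "obstdepth" and "move" as two big-endian IEEE-754 doubles, the byte order LabVIEW uses by default when flattening and unflattening data. The names encode_values, decode_values and send_values, and the assumption that a VI listens on a TCP port, are illustrative only.

```python
import socket
import struct
from typing import Tuple

# Hypothetical wire format: two big-endian doubles (obstdepth, move), 16 bytes.
PAYLOAD = struct.Struct(">dd")


def encode_values(obstdepth: float, move: float) -> bytes:
    return PAYLOAD.pack(obstdepth, move)


def decode_values(payload: bytes) -> Tuple[float, float]:
    obstdepth, move = PAYLOAD.unpack(payload)
    return obstdepth, move


def send_values(host: str, port: int, obstdepth: float, move: float) -> None:
    # Assumes a LabVIEW VI is already listening on host:port for 16-byte messages.
    with socket.create_connection((host, port), timeout=2.0) as conn:
        conn.sendall(encode_values(obstdepth, move))
```

A fixed-size binary message lets the receiver read exactly 16 bytes per update without parsing text.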

To summarize the image acquisition and processing steps:

a) Run the "LabVIEWMatlabComm.vi" LabVIEW code. This makes the LabVIEW code wait for the MATLAB code to pass values.

b) Run the "caller_fun" MATLAB code, which calls the "depth_analysis" and "LabVIEWMatlabComm" MATLAB files.

c) The "depth_analysis" MATLAB file calls the "kinect_test" MATLAB file, which tells the Kinect camera to take a snapshot.

d) The snapshot's depth map array in the workspace is processed by the algorithm in the "depth_analysis" MATLAB file, and the distance and separation values are updated in the MATLAB workspace.

e) These values are then captured by the "LabVIEWMatlabComm" MATLAB file and passed to the "LabVIEWMatlabComm.vi" LabVIEW code for use in robot navigation.
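The call order in steps c to e can be sketched as one pass of a Python function. This is a minimal illustration, not "caller_fun" itself. The callables acquire, analyze and send are hypothetical stand-ins for "kinect_test", "depth_analysis" and "LabVIEWMatlabComm". Skipping the send when no opening is found is an assumption; the source does not say what happens in that case.

```python
from typing import Callable, List, Optional, Tuple

Frame = List[List[int]]
Result = Tuple[Optional[float], Optional[float]]


def run_once(acquire: Callable[[], Frame],
             analyze: Callable[[Frame], Result],
             send: Callable[[float, float], None]) -> bool:
    """One pass of steps c-e; returns True if values were sent."""
    frame = acquire()                 # step c: take a depth snapshot
    obstdepth, move = analyze(frame)  # step d: isolate blocks, find the opening
    if obstdepth is None or move is None:
        return False                  # assumed: nothing is sent for this frame
    send(obstdepth, move)             # step e: hand the values to LabVIEW
    return True
```

Passing the three stages in as functions keeps the sequencing separate from the sensor and communication code, so each stage can be tested with stubs.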

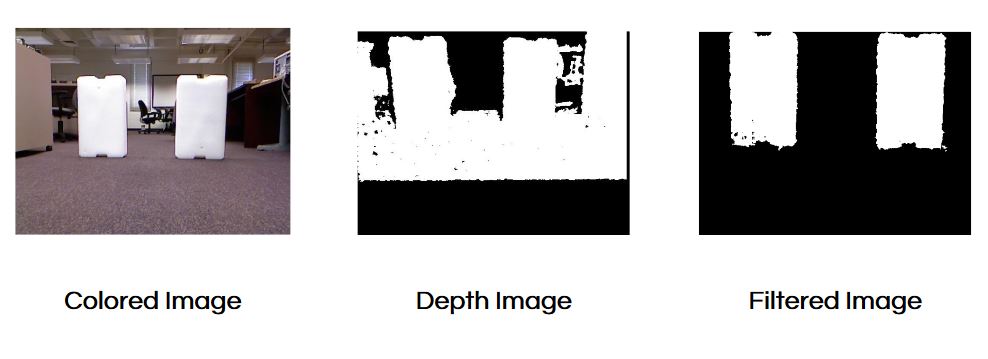

The color image and the depth map of a sample snap shot is shown below.

Current Shortcomings of the MATLAB Code

One major difficulty is accurately filtering out the reflection from the floor. As the depth image in the picture above shows, the floor appears as an obstacle and prevents accurate obstacle detection. The filtering mechanism sometimes works, but at other times it fails to remove the floor properly, which can lead to wrong calculations. In addition, the current algorithm relies on the simple assumption that the blocks are the nearest obstacles. Moreover, the code is not suited to finding open spaces between irregular objects. Beyond these detection and analysis shortcomings, the MATLAB-LabVIEW communication codes "LabVIEWMatlabComm.m" and "LabVIEWMatlabComm.vi" fail to update values correctly when they are called in a loop.

Therefore, to improve the obstacle detection and processing mechanism, a more robust algorithm is needed that works well under different conditions and passes values correctly to LabVIEW.
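One possible way to make the loop-update problem visible is to tag each message with a frame counter. The receiver then rejects any message that is not newer than the last one it accepted. This is a hypothetical Python sketch, not taken from the original code. The ">Idd" layout and the names encode and Receiver are illustrative, and the counter is assumed to stay below 2^32 (struct raises struct.error otherwise). It only detects stale or repeated values; it does not fix whatever causes "LabVIEWMatlabComm" to stop updating.

```python
import struct
from typing import Optional, Tuple

# Hypothetical message: uint32 frame counter, then obstdepth and move as doubles.
MSG = struct.Struct(">Idd")  # big-endian, 20 bytes, no padding


def encode(seq: int, obstdepth: float, move: float) -> bytes:
    return MSG.pack(seq, obstdepth, move)


class Receiver:
    """Accepts a message only if its counter is newer than the last accepted one."""

    def __init__(self) -> None:
        self.last_seq: Optional[int] = None

    def accept(self, payload: bytes) -> Optional[Tuple[float, float]]:
        seq, obstdepth, move = MSG.unpack(payload)
        if self.last_seq is not None and seq <= self.last_seq:
            return None  # stale or repeated message: keep the previous command
        self.last_seq = seq
        return obstdepth, move
```

With a counter in every message, a receiver that keeps seeing the same counter shows that the sender has stopped updating, instead of silently reusing old values.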
